What Are Your Servers Breathing? Inside the New Science of Airflow Management

This article launches our series on the evolution of data center airflow management.

The data center industry is transforming at a pace few could have imagined. Artificial intelligence, high-performance computing, and GPU-driven workloads aren’t just bending the rules of infrastructure design; they’re rewriting them entirely. Many might call this moment a transformation, but our industry is in the midst of something more: a true renaissance of the data center.

The result? A new class of facilities built to sustain unprecedented density, performance, and power.

For decades, airflow was an afterthought, a background function managed by filters, fans, and maintenance schedules. But that era is over. As racks surge from 10 kW to well over 100 kW, the air itself has become a design variable. Dust, turbulence, and contamination now translate directly into thermal inefficiency, hardware degradation, and mounting operational risk. The question is no longer how we cool, but what our servers are actually breathing.

This article series explores how the convergence of air and liquid cooling, intelligent environmental monitoring, and continuous airflow management is redefining performance in AI-ready data centers. It introduces the emerging science of “airflow intelligence,” a new discipline linking clean air to uptime, sustainability, and cost control. Through data, design insights, and real-world examples, it shows how forward-thinking operators and partners like Promera are creating cleaner, more efficient environments in which modern infrastructure can thrive.

Because in the age of AI and extreme density, success isn’t just about staying cool; it’s about breathing clean.

Introduction

The modern data center stands at the intersection of exponential digital growth and physical limitation. Artificial intelligence (AI), high-performance computing (HPC), and data-intensive analytics have redefined what it means to design, power, and sustain critical infrastructure. The transformation is no longer incremental. Driven by physics and new infrastructure requirements, the changes in our facilities are now structural. Every aspect of facility engineering, from electrical distribution to the path that air takes across a GPU blade, is being rewritten in real time.

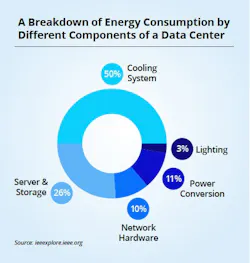

Nowhere is this change more visible, or more urgent, than in cooling and airflow. Global data center power demand surged to 7.4 GW in 2023, a 55 percent increase over the previous year. The U.S. Department of Energy estimates that data centers accounted for 4.4% of total U.S. electricity consumption in 2023 and may reach 6.7 to 12% by 2028. Within the facility itself, cooling now represents roughly half of total energy use, up from 43 percent a decade ago. And still, demand continues to climb.

According to the 2026 AFCOM State of the Data Center Report, average rack density has already reached 27 kW, a 69% year-over-year jump and more than triple the average of less than five years ago. Nearly 70% of operators expect densities to rise further as AI and HPC workloads expand. Yet 85% of the world’s data centers continue to rely primarily on air to remove heat. The same air that once moved gently across low-density racks is now being forced through concentrated clusters of GPUs drawing 60 to 100 kW, or even more, per rack.

The implications are profound. Airflow patterns once considered “good enough” can now create turbulence, recirculation, and contamination that degrade performance, drive up energy costs, and shorten the lifespan of multimillion-dollar compute assets. Dust, pressure differentials, and hot-spot formation are no longer minor maintenance issues; they are operational risk factors.

This article series examines how the physics of computing has changed and what that means for the air inside modern facilities. It explores why airflow, filtration, and environmental management have become strategic disciplines on par with power design and cooling technology. Because in a world where performance and uptime hinge on density, efficiency, and precision, the most critical question data center leaders can ask may be the simplest one: What are your servers breathing?

Addressing the Limitations of Air-Based Systems

Air has carried the thermal load of our industry for decades. Almost overnight, or so it feels, AI has exposed its limits. As GPU racks force far more heat through air paths designed for lower densities, the result is a spike in turbulence, recirculation, and particulate loading, all of which raise inlet temperatures, erode heat-sink efficiency, and shorten the life of very expensive gear.

At the same time, 34% of operators say their current cooling is inadequate, even as most facilities still rely on air to remove the majority of their heat. The industry’s response reflects this pressure. To support higher density, operators are prioritizing airflow improvements (51%), new sensors for visibility (38%), and faster liquid cooling adoption (already implemented by 19%, with 36% planning deployments in the next 12 to 24 months).

Why did we hit the wall so fast?

Three forces arrived almost at once:

- A step-change in rack power from AI/HPC clusters (not linear growth, but a jump);

- Cloud repatriation and edge build-outs pushing more workloads back into colocation (colo) and enterprise rooms;

- Supply-chain and power constraints that delayed full-scale retrofits, forcing legacy air systems to “stretch” beyond their design intent.

In practical terms, that means more hotspots, filter differentials rising sooner than planned, and airflow paths that no longer match the way GPU trays ingest and exhaust air.

New requirements are emerging for AI-era airflow systems. Beyond hot/cold aisle discipline, operators are specifying:

- Measured air quality across the white space (particulates, differential pressure, and gaseous contaminants) tied into DCIM/BCMS alarms, not just facility-level PM counts.

- Airflow visualization and sensing at the rack/row level to validate containment, prevent short-circuiting, and tune fan curves.

- Filter strategy modernization (e.g., higher-performance media with lifecycle tracking) to keep pressure drop (ΔP) low as densities rise.

- Hybrid thermal designs that treat air as a managed resource alongside liquid (e.g., rear-door heat exchangers and direct-to-chip), so the remaining air loop stays clean, laminar, and efficient.
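To make the measured-air-quality and filter-lifecycle requirements above more concrete, here is a minimal Python sketch, assuming a site already exports rack-level readings for particulates, filter pressure drop (ΔP), and cold-to-hot aisle pressure. The `RackReading` fields, the `evaluate` and `days_until_filter_limit` functions, and every threshold are hypothetical placeholders, not values from the report or from any DCIM/BCMS product. In practice, the returned messages would be forwarded to whatever alarm interface the site uses.

```python
from dataclasses import dataclass
from typing import List, Optional

# HYPOTHETICAL thresholds for illustration only; real limits should come from
# equipment vendors, filter manufacturers, and the site's own baseline data.
PM25_LIMIT_UG_M3 = 12.0    # assumed particulate ceiling at the rack inlet
FILTER_DP_LIMIT_PA = 250.0  # assumed filter replacement point (pressure drop)
AISLE_DP_MIN_PA = 2.0       # assumed minimum cold-to-hot aisle pressure margin


@dataclass
class RackReading:
    rack_id: str
    pm25_ug_m3: float   # particulate (PM2.5) measured at the rack inlet
    filter_dp_pa: float  # pressure drop across the serving filter bank
    aisle_dp_pa: float   # cold-aisle minus hot-aisle static pressure


def evaluate(reading: RackReading) -> List[str]:
    """Return alarm messages for one rack-level reading (empty if healthy)."""
    alarms = []
    if reading.pm25_ug_m3 > PM25_LIMIT_UG_M3:
        alarms.append(f"{reading.rack_id}: particulates above limit")
    if reading.filter_dp_pa > FILTER_DP_LIMIT_PA:
        alarms.append(f"{reading.rack_id}: filter dP high, schedule replacement")
    if reading.aisle_dp_pa < AISLE_DP_MIN_PA:
        # A small or negative margin suggests hot air short-circuiting
        # back into the cold aisle, i.e., a containment problem.
        alarms.append(f"{reading.rack_id}: low aisle pressure, check containment")
    return alarms


def days_until_filter_limit(
    dp_history_pa: List[float], limit_pa: float = FILTER_DP_LIMIT_PA
) -> Optional[float]:
    """Estimate days until filter dP reaches limit_pa from daily readings.

    Fits a least-squares line to the history (one reading per day) and
    extrapolates from the latest reading. Returns None when there are fewer
    than two readings or dP is not rising.
    """
    n = len(dp_history_pa)
    if n < 2:
        return None
    mean_x = (n - 1) / 2
    mean_y = sum(dp_history_pa) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(dp_history_pa))
    den = sum((i - mean_x) ** 2 for i in range(n))
    slope = num / den  # Pa per day
    if slope <= 0:
        return None
    remaining = max(limit_pa - dp_history_pa[-1], 0.0)
    return remaining / slope


if __name__ == "__main__":
    print(evaluate(RackReading("A02", pm25_ug_m3=20.0, filter_dp_pa=300.0, aisle_dp_pa=1.0)))
    print(days_until_filter_limit([100.0, 110.0, 120.0, 130.0]))
```

The trend function reflects the point made earlier about filter differentials rising sooner than planned: tracking the rate of ΔP growth, rather than a single reading, lets maintenance be scheduled before the limit is reached. A simple linear fit is only one possible choice; loading curves for real filter media are often nonlinear.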

Bottom line: Even as liquid expands, what your servers breathe still determines performance, uptime, and total cost. The new standard is continuous, data-driven airflow intelligence: designing, measuring, and maintaining the air itself.

As power, density, and environmental expectations continue to rise, air is no longer a passive element; it’s an active design constraint. The modern facility must now treat airflow the way it treats power distribution or cooling topology: as a living, monitored system that determines the health of every server within it.

This is where the story begins: the very way servers breathe has changed forever.

Download the full report, The Hidden Cost of Dirty Air: How Contamination Threatens AI and HPC Data Centers, featuring Promera, to learn more. In our next article, we’ll examine how servers breathe in the age of AI.

About the Author