The Acceleration Effect: When Data Center Evolution Outpaces Design

We continue our article series on the evolution of data center airflow management. This week, we’ll explore the operational consequences — what happens when the air servers breathe is not as clean, stable, or controlled as it needs to be?

By the early 2000s, data center airflow was a solved equation, or so it seemed. Raised floors, CRAC units, and perforated tiles delivered predictable results for predictable workloads. Five-kilowatt racks were considered dense, and “good airflow” meant little more than maintaining a balanced hot- and cold-aisle configuration. For two decades, that model worked.

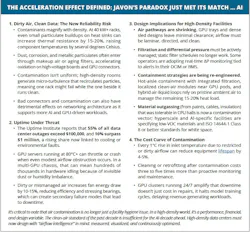

Then came GPUs, high-density compute trays, and AI workloads that inhaled power and air at unprecedented rates. The same airflow strategies that once stabilized data halls now expose their limitations. As rack power climbs past 40, 60, and even 100 kW, the velocity and turbulence of air change drastically, creating micro-pockets of contamination and recirculation that legacy containment systems were never designed to handle.

The result is not just thermal inefficiency; it’s environmental instability. Tiny particulates, corrosion byproducts, and unfiltered debris accumulate on heat sinks and boards, forming an insulating layer that silently erodes performance. Even minor contamination can increase fan speeds by 10-15%, raise inlet temperatures by several degrees, and shorten component life cycles.
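To put the fan-speed figure in perspective, the fan affinity laws say that, for a given fan and air density, power draw scales with roughly the cube of rotational speed. The sketch below applies that idealized relation to the 10-15% range cited above. The function name is illustrative, and real fans will deviate somewhat from the ideal curve.

```python
def fan_power_ratio(speed_increase: float) -> float:
    """Idealized fan affinity law: power scales with the cube of speed.

    speed_increase is fractional (0.10 means 10% faster).
    Assumes the same fan, the same air density, and an unchanged system curve.
    """
    return (1.0 + speed_increase) ** 3


for pct in (10, 15):
    ratio = fan_power_ratio(pct / 100)
    print(f"{pct}% faster fans -> about {100 * (ratio - 1):.0f}% more fan power")
```

Under this idealization, a 10% speed increase costs about 33% more fan power, and a 15% increase about 52%.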

In GPU-dense clusters, where a single rack may host hundreds of thousands of dollars in hardware, the cost of dirty air is no longer trivial; it’s existential.

While liquid and hybrid systems are gaining traction, more than 80 percent of the world’s data centers still rely primarily on air to remove heat. That air is no longer a neutral medium; it’s a design variable that must be measured, filtered, and managed as precisely as power distribution or network latency.

The question facing operators today is not simply whether air cooling can keep up; it’s whether the air itself is clean and stable enough to sustain the next generation of compute.

Air Under Pressure: Contamination, Outages and the New Rules of Density

The industry is expanding faster than at any point in its history. What once felt like steady growth now feels like compression. Density is rising. AI adoption is accelerating. Infrastructure is being stretched in ways few facilities were originally designed to handle.

According to the latest AFCOM State of the Data Center research, average rack density has climbed to 27 kW, and 69% of operators expect it to rise even further. At the same time, 39% report that their current cooling systems are already inadequate to meet growing demand.

That statistic alone should give every operator pause.

Because even as the industry explores liquid cooling and hybrid solutions, 64% of data center heat is still managed by air. Air remains the dominant thermal medium. Yet the conditions under which that air must operate have fundamentally changed.

Cooling and Airflow at a Crossroads

Liquid cooling technologies are gaining momentum, particularly in AI and HPC environments. But adoption is not frictionless. AFCOM data shows that 31% of operators cite integration complexity as a barrier, another 31% cite cost, and 21% point to skills shortages as limiting factors.

The result is a transitional environment. Many facilities are layering liquid cooling into legacy air-cooled architectures. Hybrid designs are becoming the norm.

But here is the overlooked reality. Even in liquid-assisted environments, air still plays a critical role. It removes residual heat, supports surrounding infrastructure, and maintains room-level environmental stability. It continues to move through plenums, across racks, and around containment systems.

When density increases, airflow velocity increases. When airflow velocity increases, particulate movement increases. When particulate movement increases, contamination risk rises.
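The first link in that chain follows from the sensible heat equation: the airflow needed to carry away a given heat load is the load divided by air density, specific heat, and the air temperature rise across the rack. The sketch below is a rough illustration only. It assumes all rack heat leaves by air, a fixed 12 K temperature rise, and a 1.2 m² intake area; neither value comes from the article, and the function names are hypothetical.

```python
AIR_DENSITY_KG_M3 = 1.2      # approximate density of air near room temperature
AIR_CP_J_PER_KG_K = 1005.0   # approximate specific heat of air


def required_airflow_m3s(rack_kw: float, delta_t_k: float = 12.0) -> float:
    """Volumetric airflow needed to remove rack_kw of heat at a given air temperature rise."""
    return rack_kw * 1000.0 / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


def face_velocity_ms(rack_kw: float, intake_area_m2: float = 1.2,
                     delta_t_k: float = 12.0) -> float:
    """Average air speed through a fixed intake area (the area is an assumed value)."""
    return required_airflow_m3s(rack_kw, delta_t_k) / intake_area_m2


for kw in (5, 40, 60, 100):
    flow = required_airflow_m3s(kw)
    print(f"{kw:>3} kW rack: {flow:.2f} m^3/s, {face_velocity_ms(kw):.2f} m/s at the intake")
```

With the temperature rise and intake area held fixed, airflow and intake velocity scale linearly with rack power, so a 100 kW rack needs 20 times the airflow of a 5 kW rack. In practice, operators may widen the temperature rise or offload heat to liquid, which is why this is best read as an upper-bound sketch.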

Airflow has become more aggressive. The margin for environmental imperfection has narrowed.

Supply Chain and Construction Pressure

Compounding the technical challenge is a strained delivery environment. Sixty-six percent of data center projects have experienced supply chain disruptions, and 15% of those disruptions have contributed to outage events.

Compressed construction schedules and component delays can lead to compromised environmental standards. Temporary filtration, incomplete containment tuning, or deferred maintenance can introduce particulate exposure during critical commissioning windows.

As density increases, tolerance for environmental drift decreases.

Pre-commissioning contamination control, filtration validation, and airflow verification are becoming essential risk mitigation steps rather than optional best practices.

AI Acceleration

AI deployment is no longer hypothetical. Seventy-four percent of operators are actively deploying AI-capable systems, and 72% predict AI will significantly increase their capacity requirements.

This acceleration creates a feedback loop:

- More AI requires more density.

- More density requires more cooling.

- More cooling relies on more airflow.

- More airflow increases contamination sensitivity.

The infrastructure stack is under synchronized pressure.

Power and Sustainability Challenges

Thermal stress does not exist in isolation from power strategy. Sixty-six percent of operators are exploring on-site generation, while 25% already cite nuclear as a key clean energy source.

Power availability is becoming strategic. Sustainability mandates are tightening. Energy costs are rising.

When airflow inefficiencies increase fan energy draw or reduce cooling system effectiveness, they amplify both cost and sustainability pressures. Dirty air becomes a hidden contributor to higher PUE and elevated operational expense.
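A small worked example shows how extra fan energy surfaces in PUE, which is defined as total facility power divided by IT equipment power. Every figure below is a hypothetical assumption chosen for illustration, including the 30% rise in facility fan power.

```python
def pue(it_kw: float, cooling_fan_kw: float, other_overhead_kw: float) -> float:
    """PUE = total facility power / IT equipment power."""
    return (it_kw + cooling_fan_kw + other_overhead_kw) / it_kw


# Hypothetical facility: all three figures are illustrative assumptions.
IT_KW = 1000.0
FAN_KW = 100.0      # facility-side air movers such as CRAC/CRAH fans
OTHER_KW = 300.0    # chillers, power distribution losses, lighting, and so on

baseline = pue(IT_KW, FAN_KW, OTHER_KW)
degraded = pue(IT_KW, FAN_KW * 1.30, OTHER_KW)  # assumed 30% more facility fan power
print(f"PUE {baseline:.2f} -> {degraded:.2f}")
```

In this sketch, PUE moves from 1.40 to 1.43. Fans inside the servers count toward IT power under the PUE definition, so their extra draw raises total energy use without raising PUE; the example covers facility-side fans only.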

In an environment where every kilowatt matters, airflow inefficiency is no longer acceptable.

Now, let’s put all of this together and understand one key point.

The Compounding Effect of Contamination

As rack densities climb, contamination is no longer a slow-moving maintenance issue. It becomes a multiplier on instability.

At higher densities:

- Intake air is drawn in at higher speeds.

- Heat sinks accumulate dust more rapidly.

- Fans ramp more frequently and operate at higher RPM.

- Thermal thresholds tighten.

Dust accumulation increases thermal resistance. Elevated inlet temperatures drive fan energy consumption higher. Localized hotspots trigger throttling events. In GPU-dense clusters, this can cascade across nodes performing synchronized AI training workloads.

The economic implications are significant. A single modern AI rack can hold hundreds of thousands of dollars in hardware. Downtime events routinely exceed six figures in direct financial impact. Contamination-related airflow inefficiencies may not cause immediate failure, but they quietly erode performance margins until instability emerges.

Air quality is no longer cosmetic. It is operational insurance.

The data is clear. Density is rising. AI adoption is accelerating. Air remains the dominant cooling medium in most facilities. And yet the environmental conditions surrounding that air are becoming more volatile, more sensitive, and more consequential.

The industry cannot outgrow this challenge by adding capacity alone. More CRAC units, larger chillers, or incremental retrofits will not solve a fundamentally changing airflow equation. When contamination risk increases, when thermal margins narrow, and when GPU clusters operate at sustained peak utilization, reactive maintenance is no longer sufficient.

What is required now is intentional airflow engineering. Not just cooling systems, but clean air systems. Not just filtration, but measurable contamination control. Not just containment, but visibility into how air actually moves through high-density environments.

Download the full report, The Hidden Cost of Dirty Air: How Contamination Threatens AI and HPC Data Centers, featuring Promera, to learn more. In our next article, we’ll outline the path forward and why the real competitive advantage lies in designing facilities that do not just move air but understand it.

About the Author