Microsoft Builds for Two Worlds: Sovereign Cloud and AI Factories

Key Highlights

- Microsoft’s record $37.5 billion quarterly capex underscores its commitment to building large-scale AI and cloud infrastructure, with significant investment in compute chips and data center campuses.

- The company is strategically repurposing capacity in Texas and Norway, taking over projects initially linked to OpenAI, to ensure premium AI capacity remains within its control despite diversified sourcing.

- Microsoft’s Fairwater AI superfactory concept introduces a new data center architecture designed for coordinated, large-scale AI model training across multiple sites with advanced cooling and networking.

- Power density and sustainability are central to Microsoft’s infrastructure plans, with the company exploring high-temperature superconducting power lines and community-first initiatives to address political and environmental concerns.

- International expansion includes new sovereign cloud regions in Denmark and Saudi Arabia, emphasizing regional compliance, data sovereignty, and localized AI services, complementing its global infrastructure portfolio.

So far in 2026, across the United States and overseas, Microsoft is building an infrastructure portfolio at full hyperscale. The strategy runs on two tracks.

The first is familiar: sovereign cloud expansion involving new regions, local data residency, and compliance-driven enterprise infrastructure.

The second is larger and more consequential: purpose-built AI factory campuses designed for dense GPU clusters, liquid cooling, private fiber, and power acquisition at a scale that extends far beyond traditional cloud infrastructure.

Despite reports last year that Microsoft was pulling back on data center development, the company is accelerating. It is not only advancing its own large-scale campuses, but also absorbing premium AI capacity originally aligned with OpenAI. In Texas and Norway, projects tied to OpenAI’s infrastructure plans have shifted back into Microsoft’s orbit.

Even after contractual changes gave OpenAI greater flexibility to source compute elsewhere, Microsoft remains the market’s most reliable backstop buyer for top-tier AI infrastructure. It no longer needs to control every OpenAI build to maintain its position.

In 2026, Microsoft is still the company best positioned to turn uncertain AI demand into deployed capacity: concrete, steel, power, and silicon at scale.

Building at Industrial Scale

The clearest indicator of Microsoft’s intent is its capital spending. Reuters reported that in the January 2026 earnings cycle, Microsoft’s quarterly capital expenditures reached a record $37.5 billion, up nearly 66% year over year. The company’s cloud backlog rose to $625 billion, with roughly 45% of remaining performance obligations tied to OpenAI. About two-thirds of that quarterly capex was directed toward compute chips.
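To put those figures in proportion, the short Python sketch below works through the arithmetic they imply. The inputs are the rounded numbers reported above; the derived values are back-of-envelope estimates, not reported figures. The sketch also assumes the 45% OpenAI share applies to the full $625 billion backlog, and the function and variable names are illustrative only.

```python
# Inputs are the rounded figures reported in the article.
# Every derived value is an estimate, not a reported number.
QUARTERLY_CAPEX_B = 37.5   # record quarterly capex, $ billions
YOY_GROWTH = 0.66          # "nearly 66%" year over year (approximate)
BACKLOG_B = 625.0          # cloud backlog, $ billions
OPENAI_SHARE = 0.45        # "roughly 45%" tied to OpenAI (assumed to apply to the full backlog)
CHIP_SHARE = 2 / 3         # "about two-thirds" of capex toward compute chips


def implied_figures():
    """Return rough dollar amounts (in $ billions) implied by the reported ratios."""
    return {
        # Capex in the same quarter a year earlier, backed out of the growth rate.
        "prior_year_capex_b": QUARTERLY_CAPEX_B / (1 + YOY_GROWTH),
        # Portion of the quarter's capex directed toward compute chips.
        "chip_capex_b": QUARTERLY_CAPEX_B * CHIP_SHARE,
        # Portion of the backlog tied to OpenAI.
        "openai_backlog_b": BACKLOG_B * OPENAI_SHARE,
    }


if __name__ == "__main__":
    for name, value in implied_figures().items():
        print(f"{name}: ~${value:.1f}B")
```

On these assumptions, roughly $25 billion of the quarter's spending went to compute chips, about $281 billion of the backlog is tied to OpenAI, and capex in the year-earlier quarter was somewhere near $22.6 billion.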

To be clear: this is no speculative buildout. Microsoft is deploying capital against a massive, committed demand pipeline, even as it maintains significant exposure to OpenAI-driven workloads. The company is solving two infrastructure problems at once: supporting broad Azure and Copilot growth, while ensuring enough frontier-scale capacity to train and run the next generation of foundation models.

The move transcends any cyclical phase of cloud expansion. Microsoft is turning AI infrastructure into a core corporate competency, spanning siting, power acquisition, cooling, networking, and geopolitical placement.

After OpenAI: A More Flexible Advantage

In January 2025, Microsoft loosened its exclusive hold on new OpenAI infrastructure, giving OpenAI more freedom to source capacity elsewhere. That shift created space for OpenAI’s work with Oracle and the Stargate initiative.

But the February 2026 Microsoft–OpenAI joint statement clarified the new balance. Microsoft Azure remains central. It is still the exclusive cloud provider for stateless OpenAI APIs, and OpenAI’s first-party products continue to run on Azure, even as the company expands its infrastructure footprint beyond Microsoft.

Microsoft no longer needs to control every OpenAI build to remain structurally advantaged. It now benefits across multiple channels, including Azure-hosted OpenAI services, its own first-party AI demand, and its ability to capture high-end capacity when plans shift.

That last dynamic is already playing out.

Texas: Microsoft Steps Into Stargate-Adjacent Capacity

The clearest example is Abilene, Texas. In March 2026, Microsoft agreed to lease a roughly 700-megawatt data center project originally developed for Oracle and OpenAI. The site sits adjacent to the flagship Stargate campus. Microsoft struck the deal with Crusoe after Oracle and OpenAI stepped away from plans to occupy the expansion.

OpenAI’s existing agreements with Oracle remain in place. Microsoft is not replacing the core Stargate deployment. Rather, it is taking over adjacent capacity that Oracle and OpenAI chose not to pursue.

This move is less a breakdown in the OpenAI–Oracle relationship than a signal of how fluid AI campus planning has become at this scale. Financing terms shift. Power delivery timelines move. Model roadmaps evolve. Demand can outpace development schedules.

Microsoft’s advantage is straightforward: when premium AI capacity becomes available, it has the demand to fill it.

For developers, the message is clear. Microsoft is not just a pre-committed tenant. It is an opportunistic buyer of high-quality AI capacity when project dynamics change.

Norway: Reinforcing the Pattern

The Norway deal confirms this is not a one-off.

Microsoft has agreed to lease data center capacity in Narvik, Norway, at a campus originally aligned with OpenAI and marketed as part of Stargate. At the Arctic Circle site, Microsoft will deploy 30,000 additional Nvidia Vera Rubin chips through an agreement with Nscale, building on a prior $6.2 billion commitment in the region.

Narvik shows Microsoft doing more than backfilling unused capacity. It is consolidating access to premium, GPU-dense AI infrastructure in a location that also offers advantages in climate, renewable energy positioning, and European market access.

Together, Texas and Norway point to the same conclusion. Even as OpenAI diversifies its infrastructure sourcing, Microsoft remains at the center of the AI infrastructure market. It captures demand on Azure, and it captures value when alternative plans leave high-quality capacity available.

The Rise of the AI Superfactory

In September 2025, Microsoft said it was in the final phases of building its Fairwater campus in Mount Pleasant, Wisconsin, with initial operations expected in early 2026. The company committed $3.3 billion to the first phase and announced an additional $4 billion for a second facility, pushing its total investment in the region beyond $7 billion. The campus is designed to house hundreds of thousands of Nvidia GPUs and is explicitly positioned as a frontier-model training complex.

But Fairwater is more than a single site. It is a blueprint.

In November 2025, Microsoft outlined its “AI superfactory” concept: a distributed architecture linking multiple large-scale campuses through a dedicated AI WAN. The company said a Fairwater-designed site in Atlanta began operating in October 2025, and that these campuses are engineered to function as a unified system: a virtual supercomputer spanning multiple geographies.

The design reflects a new class of infrastructure. Microsoft describes two-story buildings, GB200 NVL72 rack-scale systems, advanced liquid cooling, and tightly integrated networking, all optimized to keep hundreds of thousands of GPUs operating as a single coordinated system.

This marks a fundamental shift in data center design. Traditional hyperscale campuses were built to support massive volumes of independent workloads. Microsoft’s AI superfactory model is built to run one coordinated workload across millions of components at once.

Power Is Still the Constraint

In February, the Microsoft Azure Blog reported that the company is exploring high-temperature superconducting power lines inside data centers to increase electrical density without expanding the physical footprint of transmission infrastructure. The goal is clear: scale power delivery while reducing substation requirements and limiting community impact.

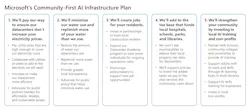

That aligns with Microsoft’s January 2026 “community-first” initiative. The company pledged to pay utility rates that fully cover the cost of its power demand, rather than shifting those costs to local ratepayers. It also committed to replenishing more water than its U.S. data centers consume and publishing region-level water data.

The move is a direct response to rising political scrutiny over utility pricing, water use, and local opposition tied to AI-driven data center growth. For hyperscale developers, this is no longer a secondary concern. Political permission now sits alongside interconnection as a core requirement for building at scale.

Community opposition has already delayed or derailed projects across the industry.

The company’s response is to embed “responsible growth” into the buildout model: absorb infrastructure costs, address water use directly, increase transparency, and engage early on local economic impact.

Whether that approach will consistently secure approval remains an open question.

Cheyenne and the Land-Bank Logic of AI Infrastructure

On April 14, Microsoft announced plans to acquire roughly 3,200 acres in Cheyenne, Wyoming, for future data center development. The site includes a 200-acre parcel in Bison Business Park and an additional 3,000 acres in southeast Cheyenne. The project is expected to unfold over multiple years, with public hearings and ongoing community engagement.

Cheyenne is already an established Microsoft market. What matters here is how the company is treating land. In the AI era, land is no longer just a future building site. It is optionality on power, cooling, local politics, and network topology.

As Bowen Wallace, Corporate Vice President of Datacenters for the Americas Region at Microsoft, put it:

“Since the development of our first data center in 2012, Microsoft has been working to strengthen, not strain, the community of Cheyenne. We’re excited to continue our growth in the state bringing more investment, opportunity and tax revenue to the community we’ve been a part of for more than 14 years.”

Global Expansion, Different Mission

While U.S. AI factory development draws the spotlight, Microsoft’s international buildout in 2026 remains substantial. In March, the company opened its Denmark East cloud region, spanning campuses in Høje Taastrup, Køge, and Roskilde, positioned around digital resilience, low-latency access, and local data handling. In Saudi Arabia, its Saudi Arabia East region in the Eastern Province is scheduled to come online in the fourth quarter of 2026 with three availability zones.

These are not quite “pure” AI factories. They serve a different purpose: sovereignty, compliance, enterprise cloud modernization, and localized AI services.

That distinction is the strategy. Microsoft is expanding both cloud and AI infrastructure, but optimizing each for a different role.

This dual-track approach is a structural advantage over competitors focused primarily on either enterprise cloud or frontier-scale AI training capacity.

What This Means for the Industry

For developers, utilities, investors, and colocation providers, the message is that Microsoft is turning data center construction into a system-level competency.

Its spending anchors large campuses. Its cloud platform provides a monetization layer beyond any single tenant. Its OpenAI relationship sustains exposure to frontier-model demand. And its willingness to absorb reallocated capacity shows it can move faster than competitors when premium infrastructure becomes available.

Even as OpenAI expands its options, Oracle pushes aggressively into AI infrastructure, and Stargate builds its own gravitational pull, Microsoft retains the balance sheet, demand base, and platform leverage to capture strategic capacity as it emerges.

The company is assembling a hybrid infrastructure portfolio: sovereign cloud regions and AI factories, linked by private networking, shaped by power constraints, and increasingly justified through community economics. From Wisconsin training campuses to Atlanta-linked superfactory design, Cheyenne land banking, Arctic Circle GPU deployments, and Stargate-adjacent expansion in Texas, each project serves a distinct role. Together, they form a coherent system.

Microsoft no longer needs to control the entire OpenAI ecosystem to dominate the infrastructure layer of AI. It only needs to be the company best positioned to turn uncertain demand into operational capacity: faster, and at greater scale, than anyone else.

At Data Center Frontier, we talk the industry talk and walk the industry walk. In that spirit, DCF Staff members may occasionally use AI tools to assist with content. Elements of this article were created with help from OpenAI's GPT5.
