Designing Data Centers for the AI Age

This launches our article series on how data center leaders are rethinking cooling strategies, embracing modularity, and preparing their facilities to support AI at scale with confidence and resilience.

If you’ve followed the news lately, even in mainstream media, one thing about digital infrastructure is evident: the data center industry has reached a defining moment.

Today, the data center industry is confronting a structural transformation driven by artificial intelligence and high-performance computing workloads that far exceed the operational profiles of legacy enterprise systems. What was once a gradual progression of incremental improvements has become an accelerated shift in how facilities must be designed, powered, and cooled. Traditional data centers optimized around air-cooled environments and conservative power envelopes are now strained by workloads that demand dramatically higher power densities and thermal management capacity.

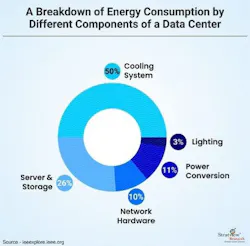

Industry data confirms this acceleration. According to the AFCOM State of the Data Center report, average rack power density has climbed significantly in recent years, rising from roughly 7 kW per rack in 2021 to around 27 kW today, with nearly 80 percent of industry professionals expecting further increases due to AI and other high-performance workloads. Within the facility itself, cooling now represents roughly half of total energy use, up from 43 percent a decade ago. And still, demand continues to climb.

This trend reflects an environment where rack-level demands are consistently outpacing traditional cooling and power design assumptions.

At the same time, global power demand from data centers is projected to grow sharply. Independent forecasts estimate that worldwide electricity consumption for data centers could nearly double by 2030, reaching levels that place significant stress on electrical infrastructure and grid capacity.

As data centers scale to support AI workloads, they account for a growing share of overall energy use, driving urgency around operational efficiency and infrastructure strategy.

The implications are clear: data centers can no longer rely solely on conventional cooling approaches.

As rack densities rise and power consumption surges, air-based thermal management is approaching its practical limits. This shift requires a fundamental rethinking of cooling architectures, power distribution models, and facility design.

Let’s dive in and explore how these trends have converged to redefine what constitutes a “cool” data center in the AI era and why traditional assumptions about power, cooling, and rack design are being replaced with new metrics and priorities.

Physics: The Limits of Air and Heat Dissipation

For most of the data center industry’s history, air has been the silent workhorse of thermal management. It scaled reliably alongside enterprise workloads, absorbed incremental increases in rack density, and became an assumed constant in facility design. That assumption no longer holds. The rapid rise of artificial intelligence has revealed the limits of air-based cooling faster than many expected, exposing constraints rooted not in engineering preference, but in physics.

According to the latest AFCOM State of the Data Center 2026 report, today’s GPU-dense racks are operating at an average of roughly 27 kW per rack, with the majority of operators anticipating continued growth. These are not incremental increases. They represent a fundamental shift in heat concentration, airflow demand, and component sensitivity. As power density rises, airflow that once appeared sufficient now struggles to maintain stability. Higher heat flux, tighter server layouts, and more thermally sensitive silicon amplify turbulence and recirculation, increasing inlet temperatures and degrading heat-sink performance. The consequence is not theoretical. It directly impacts the reliability, efficiency, and lifespan of hardware that carries a far higher capital cost than traditional IT equipment.
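
To make the physics concrete, the sketch below estimates the volumetric airflow needed to carry a given rack heat load, using the standard sensible-heat relation: airflow = power / (air density × specific heat × temperature rise). The air properties and the 12 K inlet-to-exhaust temperature rise are illustrative assumptions, not figures from the AFCOM report, and the function name is hypothetical.

```python
# Rough sensible-heat estimate of the airflow an air-cooled rack needs.
# All constants are illustrative assumptions, not values from the report.

AIR_DENSITY_KG_M3 = 1.2      # approximate density of air near sea level at ~20 °C
AIR_CP_J_PER_KG_K = 1005.0   # approximate specific heat capacity of air
M3S_TO_CFM = 2118.88         # cubic metres per second -> cubic feet per minute


def required_airflow_m3s(rack_power_kw: float, delta_t_k: float) -> float:
    """Airflow (m^3/s) needed to remove rack_power_kw with an air temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    watts = rack_power_kw * 1000.0
    return watts / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


if __name__ == "__main__":
    # 7 kW and 27 kW are the rack densities cited in the article;
    # the 12 K temperature rise is an assumed design value.
    for power_kw in (7, 27):
        flow = required_airflow_m3s(power_kw, delta_t_k=12.0)
        print(f"{power_kw:>3} kW rack: {flow:.2f} m^3/s (~{flow * M3S_TO_CFM:,.0f} CFM)")
```

At a fixed temperature rise, airflow scales linearly with heat load, so under these assumptions a 27 kW rack needs nearly four times the airflow of a 7 kW rack through the same physical footprint.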

Industry Data Underscores Strain

More than one-third (39%) of operators report that their existing cooling infrastructure is already inadequate, even though most facilities still rely primarily on air to remove heat. The industry’s response reflects this pressure. Operators are investing in improved airflow management, expanding sensor coverage to gain visibility into thermal behavior, and accelerating the adoption of liquid cooling. While liquid deployments remain in early stages for many, planned implementations over the next 12 to 24 months signal a clear and accelerating shift.

The speed of this inflection point is not coincidental. Multiple forces converged almost simultaneously. AI and HPC clusters introduced a step-change in rack power rather than a gradual climb. Cloud repatriation and edge expansion pushed dense workloads back into colocation and enterprise environments. At the same time, power availability and supply chain constraints slowed large-scale retrofits, forcing legacy air systems to operate well beyond their original design assumptions. In practice, this manifests as more frequent hotspots, filters reaching pressure limits sooner, and airflow paths misaligned with modern GPU intake and exhaust patterns.

As a result, new requirements are emerging for airflow management in AI-era data centers. Operators are moving beyond basic hot- and cold-aisle discipline toward continuous, data-driven airflow intelligence that treats air as a critical, managed system rather than a background condition. These emerging requirements include:

- Granular airflow visibility at the rack and row level

Real-time sensing and visualization to validate containment, detect short-circuiting, and tune fan behavior as densities and thermal loads fluctuate.

- Hybrid thermal architectures that intentionally balance air and liquid cooling

Designs that deploy liquid where it delivers the most value, such as rear-door heat exchangers or direct-to-chip cooling, while preserving a clean, stable, and efficient air loop for remaining heat removal.

- Operational models built for continuous adjustment, not static design

Processes that recognize airflow paths, cleanliness, and pressure relationships as dynamic variables that must be measured, tuned, and maintained over time.

In this new reality, air is no longer a passive element of data center design. It is an active constraint that directly influences performance, reliability, and total cost of ownership. The modern facility must manage airflow with the same rigor applied to power distribution and cooling topology. This shift marks the starting point of the AI data center journey, where the way servers breathe becomes a defining factor in how successfully infrastructure scales into the future.

This is where the story of the AI data center begins:

Not with higher power targets or incremental cooling upgrades, but with a fundamental shift in how servers take in, move, and reject heat, and in how every aspect of the facility must evolve to support that new reality.

The AI Moment: Why Data Center Cooling Has Changed Forever

Only a few years ago, rack power densities were modest and predictable. Most enterprise environments operated comfortably below 5 kW per rack, and even large-scale deployments were designed around conservative thermal assumptions. Airflow was abundant, margins were wide, and cooling strategies evolved slowly alongside incremental improvements in compute performance.

That equilibrium no longer exists. Average rack densities have climbed into the low to mid-teens, and forward-looking deployments are accelerating well beyond that baseline. AI and HPC clusters are now driving rack power past 30 kW, with many environments planning for 50 kW and higher as GPU density continues to increase. In purpose-built AI facilities, densities approaching or exceeding 100 kW per rack are no longer theoretical. They are actively shaping design decisions today.

This shift is not the result of a single trend. It reflects the convergence of GPU-intensive AI training and inference, high-density HPC architectures, and the return of critical workloads from public cloud platforms into enterprise and colocation facilities. Together, these forces have created a clear divergence in thermal reality. While many traditional data centers still operate near 12 to 15 kW per rack, hyperscale and AI-focused environments are already running at more than double that level.

Air was once the quiet constant of data center design. Today, it is being pushed to the limits of its physical capability. The way servers draw in, move, and reject air has fundamentally changed. As a result, airflow is no longer a background assumption. It has become a primary design constraint that will determine how successfully data centers scale to support AI-driven workloads.

The Thermal Equation in the AI Era

The data center industry is not simply scaling. It is transforming. For more than two decades, facilities were designed around traditional enterprise workloads such as email, databases, and virtualized compute. These systems grew steadily, distributed power relatively evenly, and produced thermal profiles that air cooling could manage with incremental tuning. Cooling strategies matured slowly because the workloads themselves behaved predictably.

Artificial intelligence has broken that model.

AI and high-performance computing workloads concentrate massive amounts of compute into increasingly small physical footprints. Power draw is no longer smooth or evenly distributed. It is dense, localized, and highly dynamic. According to the latest AFCOM State of the Data Center research, average rack densities continue to rise sharply, with operators reporting sustained pressure on both power and cooling capacity as AI adoption accelerates across enterprise, colocation, and hyperscale environments.

From Enterprise IT to AI Infrastructure

The difference between legacy servers and modern AI systems is structural, not incremental. AI platforms introduce a new thermal and electrical reality driven by several factors:

- Thermal density: GPUs generate significantly higher heat flux per unit area than traditional CPUs.

- Power concentration: AI systems draw power at the rack and chassis level rather than distributing load across the room.

- Environmental sensitivity: Modern accelerators are more sensitive to airflow stability, pressure balance, and contamination.

Real-world deployments now reflect this shift. Operators are already running AI racks in the 70 to 75 kW range using rear-door heat exchangers and liquid-assisted cooling architectures. These are not pilot experiments. They are production environments supporting revenue-generating workloads.

A recent partnership between nVent, Siemens, and NVIDIA saw the development of the 100 MW Hyperscale AI Blueprint. That document illustrates where this trajectory is heading. The reference architecture, which we’ll discuss more in Section 4, is designed around NVIDIA GB200 NVL72 systems rated at approximately 127 kW per rack, with more than 80 percent of rack heat removed via direct-to-chip liquid cooling and the remainder handled by a tightly controlled air loop.

This design reflects a future where air alone is no longer capable of carrying the thermal load. On that note, let’s briefly look at why legacy isn’t keeping up.

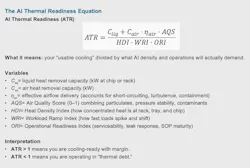

Why Legacy Metrics Are Failing

For years, the industry relied on simple planning metrics such as watts per square foot and kilowatts per rack. Those metrics assumed a relatively uniform load distribution and predictable airflow behavior. AI workloads invalidate those assumptions.

Within the next 36 months, these traditional metrics will become increasingly misleading. What matters now includes:

- Peak rack power rather than room averages

- Heat concentration at the chip and tray level

- The interaction between liquid-cooled racks and residual air cooling

- The operational impact of airflow quality on high-value hardware
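
A small illustration of why room averages can mislead: the sketch below compares the average and peak load for a single row of racks. The per-rack loads and the 30 kW air-cooling threshold are invented for illustration (the 75 kW racks echo the production AI racks mentioned earlier), and the function name is hypothetical.

```python
from statistics import mean

# Hypothetical per-rack loads (kW) for one row: mostly legacy racks plus two AI racks.
rack_loads_kw = [8, 8, 10, 12, 8, 75, 75, 10]


def density_summary(loads_kw, air_limit_kw):
    """Return average load, peak load, and positions of racks above an assumed air-cooling limit."""
    over_limit = [i for i, kw in enumerate(loads_kw) if kw > air_limit_kw]
    return {
        "average_kw": mean(loads_kw),
        "peak_kw": max(loads_kw),
        "over_limit": over_limit,
    }


# 30 kW is an assumed practical limit for air cooling, not a figure from the report.
summary = density_summary(rack_loads_kw, air_limit_kw=30)
print(summary)
```

In this made-up row the average sits below the assumed limit, yet two racks exceed it by a wide margin, which is the kind of gap that planning on averages alone would hide.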

In the AI Blueprint reference design, cooling and power are planned at the pod level, with each pod supporting over 2.2 MW of IT load across tightly integrated power and cooling systems.

This approach highlights a broader industry shift away from monolithic halls toward modular, high-density building blocks that can scale rapidly without destabilizing the facility.

Liquid Cooling Returns to the Mainstream and Is Cool Again

Liquid cooling is not new, but its role has changed.

Once confined to niche HPC environments, it is now a foundational requirement for AI-ready data centers. AFCOM data shows a growing percentage of operators actively planning or deploying liquid cooling to address density and efficiency constraints.

The Blueprint reinforces this trend by treating liquid cooling as the primary thermal pathway, not an enhancement. In the reference architecture:

- Each AI rack receives dedicated liquid cooling

- Modular CDUs deliver over 6 MW of liquid IT heat removal per pod

- Air handles only the remaining residual load, typically 20 to 25 kW per rack, under tightly managed conditions
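
The split between liquid and air can be checked with simple arithmetic. The sketch below starts from the approximately 127 kW rack rating cited for the reference architecture and applies two liquid capture fractions: 80 percent (the lower bound implied by "more than 80 percent") and 84 percent (an assumed value chosen only for illustration). The function name is hypothetical.

```python
def split_rack_heat(rack_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Split a rack heat load into (liquid_kw, air_kw) for a given liquid capture fraction."""
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


# 127 kW is the approximate rack rating cited in the article;
# the capture fractions are illustrative assumptions.
for fraction in (0.80, 0.84):
    liquid_kw, air_kw = split_rack_heat(127.0, fraction)
    print(f"{fraction:.0%} liquid capture: {liquid_kw:.1f} kW to liquid, {air_kw:.1f} kW to air")
```

Under these assumptions the residual air load lands between roughly 20 and 25 kW per rack, in line with the range above. That is close to the roughly 27 kW average rack density cited earlier, which suggests the air loop in such a design still carries a substantial load.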

Importantly, airflow and air management aren’t going away. The shift we’re seeing does not eliminate air. It redefines its role. Air becomes a precision-managed system that supports stability, cleanliness, and resilience rather than carrying the full thermal burden.

A New Thermal Equation

Taken together, these trends signal a permanent change. Cooling in the AI era is no longer about incremental optimization. It requires rethinking the thermal equation entirely.

AI-ready facilities must be designed for:

- Rapid increases in density that outpace traditional retrofit cycles

- Modular architectures that isolate risk and enable fast deployment

- Hybrid cooling systems that intentionally balance air and liquid

- Operational models that treat airflow quality as a reliability variable

This is the AI moment for data centers. The assumptions that shaped infrastructure for the last twenty years are colliding with workloads that behave differently, cost more when they fail, and scale faster than legacy designs allow.

The central question is no longer whether AI will change data center cooling.

It is whether your data center can evolve fast enough to support it, or whether it remains constrained by yesterday’s assumptions.

The new thermal equation is not theoretical. It reflects what operators are already encountering as AI workloads expose gaps between planned capacity and real-world performance.

Download the full report, Power, Cooling, and Bravery: Designing Data Centers for the AI Age, featuring nVent, to learn more. In our next article, we’ll examine where legacy infrastructure begins to break down and why an industry built for predictability is now being forced to operate at AI speed.
