Cooling’s New Reality: It’s Not Air vs. Liquid Anymore. It’s Architecture.

Key Highlights

- Chip-level innovations like HRL's Low-Chill cold plates aim to increase GPU cooling capacity while reducing pumping power, supporting hotter coolant loops and water-scarce environments.

- OEMs are acquiring liquid cooling IP and expanding manufacturing capacity to meet the rapid deployment and scaling needs of AI data centers, making proprietary thermal solutions a strategic differentiator.

- Reliability-focused chillers from Carrier and Modine demonstrate that robust heat rejection and quick recovery remain critical, especially under real-world operating extremes.

- Immersion cooling platforms, such as Infinium Edge, are positioning themselves as scalable, high-density solutions that unify chemistry, manufacturing, and deployment for next-generation AI infrastructure.

- The industry is integrating water management innovations, including wastewater-based cooling concepts, reflecting a broader shift towards sustainable and community-aware thermal solutions.

By early 2026, the data center cooling conversation has started to sound less like a product catalog and more like a systems engineering summit. The old framing - air cooling versus liquid cooling - still matters, but it increasingly misses the point. AI-era facilities are being defined by thermal constraints that run from chip-level cold plates to facility heat rejection, with critical decisions now shaped by pumping power, fluid selection, reliability under ambient extremes, water availability, and manufacturing throughput.

That full-stack shift is written all over a grab bag of recent cooling announcements. On one end of the spectrum we see a Department of Energy-funded breakthrough aimed directly at next-generation GPU heat flux. On the other are OEM product launches built to withstand –20°F to 140°F operating conditions and recover full cooling capacity within minutes of a power interruption. In between we find a major acquisition move for advanced liquid cooling IP, a manufacturing expansion that more than doubles footprint, and the quiet rise of refrigerants and heat-transfer fluids as design-level considerations.

What’s emerging is a new reality. Cooling is becoming one of the primary constraints on AI deployment: technically, economically, and geographically. The winners will be the players that can integrate the whole stack and scale it.

1) The Chip-level Arms Race: Single-phase Fights for More Runway

The most “pure engineering” signal in this news batch comes from HRL Laboratories, which on Feb. 24, 2026 unveiled details of a single-phase direct liquid cooling approach called Low-Chill™. HRL’s framing is pointed: the industry wants higher GPU and rack power densities, but many operators are wary of the cost and operational complexity of two-phase cooling.

HRL says Low-Chill was developed under the U.S. Department of Energy’s ARPA-E COOLERCHIPS program, and it claims a leap aimed squarely at the bottleneck: the approach can either increase processor cooling capability by 40% or reduce pumping power by more than 10X. That pumping-power claim is not a footnote. In AI-era liquid-cooled designs, pumping power is increasingly part of the economic and architectural equation.

“We designed this technology with real data center constraints in mind,” said Christopher Roper, principal investigator at HRL.

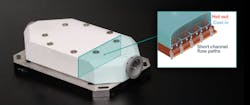

At the core is a cooling block architecture that uses an engineered 3D-printed manifold to distribute coolant through hundreds of short flow paths directly over the processor, addressing fundamental issues of conventional designs such as long channels, friction losses, and uneven coolant delivery. HRL’s disclosed metrics include:

- Thermal interface resistance: 8.2 °C/kW

- Pressure drop: below 1 psi per cooling block

- Pumping power: less than 1% of rack IT power (block-level)

- Hot-loop capability: coolant inlet temperatures up to 70°C
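To make those figures a little more concrete, here is a minimal Python sketch of two textbook relations: temperature rise equals thermal resistance times heat load, and hydraulic power equals pressure drop times volumetric flow. The 1 kW heat load and 2 L/min flow rate are hypothetical values chosen for illustration, not HRL numbers, and treating the quoted resistance as a single lumped value between coolant inlet and cooled surface is a simplifying assumption.

```python
PSI_TO_PA = 6894.757  # pascals per psi


def temperature_rise_c(resistance_c_per_kw: float, heat_load_kw: float) -> float:
    """Temperature rise across a lumped thermal resistance (delta T = R * Q)."""
    return resistance_c_per_kw * heat_load_kw


def hydraulic_power_w(pressure_drop_psi: float, flow_l_per_min: float) -> float:
    """Ideal hydraulic power (pressure drop * volumetric flow), ignoring pump efficiency."""
    flow_m3_per_s = flow_l_per_min / 1000.0 / 60.0
    return pressure_drop_psi * PSI_TO_PA * flow_m3_per_s


# Figures quoted in the article
RESISTANCE_C_PER_KW = 8.2
INLET_TEMP_C = 70.0
PRESSURE_DROP_PSI = 1.0  # article says "below 1 psi", so this is an upper bound

# Hypothetical operating point (assumptions, not HRL data)
heat_load_kw = 1.0
flow_l_per_min = 2.0

rise = temperature_rise_c(RESISTANCE_C_PER_KW, heat_load_kw)
print(f"Temperature rise: {rise:.1f} C")
print(f"Surface temperature at 70 C inlet: {INLET_TEMP_C + rise:.1f} C")
print(f"Hydraulic power per block: {hydraulic_power_w(PRESSURE_DROP_PSI, flow_l_per_min):.2f} W")
```

Under these assumptions the hydraulic power per block comes out well under one watt, which is at least consistent with the claim that block-level pumping power stays below 1% of rack IT power. Real pump power would be higher once pump efficiency and the rest of the loop are included.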

The company also claims that the approach can remove 40% more heat load compared to state-of-the-art cooling blocks under equivalent pumping power, supports heat flux up to 400 W/cm², and is scalable to higher powers. HRL even ties the performance envelope to future GPU roadmaps, stating the approach can help meet NVIDIA’s anticipated Rubin and Feynman GPU cooling needs.

Crucially, HRL’s “why it matters” pitch extends beyond silicon. By enabling ultra-low thermal resistance at the processor level, the approach can support hotter coolant loops, which in turn can make dry air coolers more viable, reducing reliance on evaporative systems and improving compatibility with water-constrained climates.

HRL, a private company owned jointly by Boeing and GM, positioned the work as deployable and partner-ready, while explicitly stating it is seeking development partners.

The takeaway? Single-phase liquid cooling isn’t done evolving. If the performance claims hold up in broader deployments, the “single-phase vs. two-phase” decision may be less about theoretical limits and more about how far innovations like manifold geometry can push practical limits.

2) OEMs Aren’t Just Shipping Chillers, They’re Buying Liquid Cooling IP

If HRL is about the physics, Johnson Controls’ news is about strategy. On Feb. 18, 2026, the company said it had signed an agreement to acquire Alloy Enterprises, a Boston-based firm founded in 2020 focused on a proprietary thermal management platform for high-performance data centers and other mission-critical industrial applications.

Johnson Controls says Alloy’s advanced direct liquid cooling components can enable:

- up to 35% improvement in thermal management efficiency

- up to 75% reduction in pressure drop

Those numbers land squarely in the same territory HRL is emphasizing: cooling efficiency and pumping power, not just peak capacity.

“This acquisition is about enabling our customers to stay ahead of fast-changing compute demands by adding another core technology that enables us to optimize the overall thermal management architecture of a data center,” said Lei Schlitz, Vice President and President, Global Products & Solutions, for JCI. “It will also strengthen our core technology capabilities that can scale across the Johnson Controls portfolio and reinforces our long-term commitment to lead more broadly in advanced thermal management solutions for mission critical applications.”

The company also used the announcement to remind the market that it’s building a broad thermal toolbox, citing offerings including its:

- YDAM magnetic bearing chiller delivering 3.5 MW of cooling and a 20% capacity density increase versus competing solutions.

- YK-HT two-stage economized centrifugal chiller, almost 30% smaller than alternatives and requiring up to 60% fewer dry coolers.

- Silent-Aire Coolant Distribution Unit (CDU) platform with cooling capacities from 500 kW to over 10 MW.

- YHAU absorption chillers, designed to recover waste heat and deliver additional cooling more than 90% more efficiently than electrical cooling.

Alloy’s CEO Alison Forsyth framed the deal as a scaling moment:

“We’re excited to join Johnson Controls and accelerate the impact of our unique technology… We look forward to this new chapter and continuing to scale with one of the world’s most respected and experienced leaders in thermal management innovation.”

The transaction is expected to close in fiscal Q3, subject to regulatory approvals and closing conditions. Financial terms were not disclosed.

The bigger signal is that cooling IP, especially for liquid cooling component architecture, is now strategic enough to acquire. That puts liquid cooling on the same playing field as power distribution and electrical gear as a domain where differentiation is increasingly tied to proprietary capability, not just integrator packaging.

3) Chillers Re-Examined for Reliability in the Real World

At the facility layer, two announcements make an unambiguous case that regardless of how “hot” future coolant loops get, real-world conditions still demand robust heat rejection and fast recovery.

Carrier’s AquaEdge 30CF: “Perform When it Matters Most”

On Feb. 26, 2026, Carrier introduced the AquaEdge® 30CF air-cooled centrifugal chiller, designed to help operators maintain continuous performance and protect uptime “under real-world operating conditions.” Carrier positioned the product as part of Carrier QuantumLeap™, its portfolio of integrated thermal management solutions for data centers.

“As data centers evolve, operators need confidence that their cooling systems will perform when it matters most,” said Christian Senu, Vice President, Data Centers, Carrier. “The AquaEdge® 30CF was engineered with our customers in mind to protect uptime through reliable operation across a range of ambient conditions and respond quickly if the unexpected occurs.”

Carrier’s key differentiators are very specific:

- Operating range: –20°F to 140°F

- Recovery: restore 100% cooling capacity in under three minutes after a power interruption

- Capacity: more than 3 MW of cooling (depending on ambient conditions)

- Architecture: proprietary two-stage, back-to-back centrifugal compressor with magnetic bearing technology

- Oil-free platform derived from the AquaEdge® 19MV water-cooled centrifugal chiller
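For readers who work in SI units, the operating range quoted above can be converted with the standard Fahrenheit-to-Celsius formula. This is a minimal sketch; the function name is illustrative, and only the two Fahrenheit endpoints come from Carrier's announcement.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Standard conversion: C = (F - 32) * 5 / 9."""
    return (temp_f - 32.0) * 5.0 / 9.0


# Endpoints of the operating range quoted in the article
for temp_f in (-20.0, 140.0):
    print(f"{temp_f:.0f} F = {fahrenheit_to_celsius(temp_f):.1f} C")
```

The range works out to roughly –28.9°C to 60°C.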

Carrier also emphasized its “expanded global chiller manufacturing capacity” as a lever to reduce deployment and supply chain risk, an increasingly important angle as campuses attempt to replicate deployments across multiple markets simultaneously.

Modine / Airedale TurboChill 3+MW: the hybrid case

Meanwhile, on Jan. 22, 2026, Airedale by Modine™ announced the TurboChill™ 3+MW, calling it a hybrid chiller engineered to manage rising AI workloads by maximizing free cooling when conditions allow while still deploying mechanical cooling for peaks and reliability.

Modine’s Art Laszlo, Group Vice President of Global Data Centers, directly addressed the industry speculation that chillers may become unnecessary as chips tolerate higher temperatures.

“There is speculation that chillers may no longer be required as next-generation chips are designed to operate at higher temperatures,” Laszlo said. “However, customers continue to demand proven cooling and reliability to protect their investments… Thermal architectures with dry coolers as the only form of heat rejection are not practical in many regions where varying ambient and recirculation conditions will still require refrigerant-based cooling for reliable data center operations.”

Modine’s “why hybrid” argument includes:

- heat waves and ambient extremes

- parasitic system losses and local recirculation

- mixed-density facilities (some racks hot-loop, others still needing traditional returns)

- global deployments across climates where “dry only” is insufficient

Taken together, Carrier and Modine are arguing that the future is not “chiller-less.” It’s conditional: more free cooling where possible and more liquid at the rack, but with refrigerant-based capacity still serving as the reliability backstop.

4) Immersion Keeps Pressing its Case, Now with “Platform” Language

On Jan. 15, 2026, Infinium launched Infinium Edge™, described as an advanced infrastructure platform designed to enable high-density AI and HPC workloads through immersion cooling. The centerpiece is Infinium Edge Immersion Fluids™, custom-engineered dielectric fluids meant to remove heat “directly at the source.”

Infinium’s messaging was blunt. The company stated that “cooling has emerged as one of the primary constraints to data center performance, efficiency, siting and scale.”

“Cooling has become one of the defining constraints on deploying state-of-the-art AI compute systems,” said Robert Schuetzle, CEO of Infinium. “Infinium Edge leverages our expertise in advanced chemistry and industrial-scale manufacturing to deliver an immersion cooling platform that advances next-generation AI infrastructure.”

The company contrasted typical density bands:

- Conventional air: ~10–20 kW/rack

- Direct-to-chip: ~40–80 kW/rack

- Immersion: higher still, positioned as a path beyond those limits
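As a rough illustration of what those density bands mean for floor space, the sketch below counts the racks needed to host a fixed IT load at each end of the two quantified bands. The 1 MW load is a hypothetical figure chosen for round numbers, and immersion is left out because the article gives no numeric band for it.

```python
import math


def racks_needed(it_load_kw: float, kw_per_rack: float) -> int:
    """Whole racks required to host a given IT load at a given rack density."""
    return math.ceil(it_load_kw / kw_per_rack)


IT_LOAD_KW = 1000.0  # hypothetical 1 MW IT load (assumption, not from the article)

# Density bands as quoted in the article (approximate kW per rack)
bands = {
    "Conventional air": (10.0, 20.0),
    "Direct-to-chip": (40.0, 80.0),
}

for name, (low_kw, high_kw) in bands.items():
    most = racks_needed(IT_LOAD_KW, low_kw)
    fewest = racks_needed(IT_LOAD_KW, high_kw)
    print(f"{name}: {fewest} to {most} racks for {IT_LOAD_KW:.0f} kW")
```

Under that assumption, the same megawatt needs 50 to 100 air-cooled racks but only 13 to 25 direct-to-chip racks.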

It also drew a line between its synthetic approach and “commodity” petroleum-derived immersion liquids, arguing that cleaner synthetic products avoid residual contaminants that can limit reliability and long-term performance.

The significance here is as much rhetorical as technical. Immersion is increasingly being sold not as a niche cooling method, but as a platform that unites chemistry, manufacturing, and deployment model.

5) The Supply Chain Reality: Cooling is Also a Manufacturing Problem

If the AI era is compressing deployment schedules, then cooling has to scale in two dimensions: technology and throughput. On Feb. 17, 2026, Boyd announced a major expansion of its design and manufacturing facility in Juarez, Mexico to increase production for AI infrastructure, hyperscale, and colocation data centers.

Boyd said it is expanding the Juarez thermal campus to approximately 460,000 square feet, up from 217,000 square feet, more than doubling capacity. The company cited rising demand for liquid-cooled solutions, and positioned the site as a high-volume capability hub for:

- CDUs ranging from 0.5 MW to 5 MW (with a roadmap to higher performance)

- direct-to-chip cold plates

- cooling loops

- in-rack manifolds
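The "more than doubling" description can be checked directly against the footprint figures Boyd quoted. A minimal sketch follows; the expanded figure is approximate per the announcement, so the ratio is approximate too.

```python
# Footprint figures quoted in the article (square feet)
ORIGINAL_SQFT = 217_000
EXPANDED_SQFT = 460_000  # described as "approximately" 460,000

added_sqft = EXPANDED_SQFT - ORIGINAL_SQFT
growth_ratio = EXPANDED_SQFT / ORIGINAL_SQFT

print(f"Added footprint: {added_sqft:,} sq ft")
print(f"Expanded / original: {growth_ratio:.2f}x")
```

That is roughly 243,000 additional square feet, or about 2.12 times the original footprint.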

“We anticipate AI data center customer needs and market dynamics allowing continuous alignment of our operations, manufacturing capacity, and technology investments for cooling up to 5 MW and beyond,” said David Huang, Boyd President, Thermal Solutions Division.

Boyd also emphasized on-site design, testing, and manufacturing engineering teams, supported by supply chain architecture and global vendor partnerships. This is language that mirrors what hyperscalers want to hear: not just capability, but repeatability and speed to market.

It all highlights the less glamorous truth of AI-era cooling. Even the best thermal designs don’t matter if the parts can’t be built and delivered at the pace campuses require.

6) AHR Expo 2026: The Chemistry Layer Becomes Visible

Several of the most revealing “quiet” signals in the data center cooling space came from the AHR Expo cycle in Las Vegas.

Arkema: Low-GWP refrigerants and data-center-targeted fluids

On Feb. 2, 2026, Arkema said it would showcase an expanded portfolio of lower Global Warming Potential refrigerants and thermal management solutions, explicitly highlighting the data center market.

The company spotlighted:

- Forane® 454B, positioned as a replacement for R410A in comfort cooling, with lower GWP than Forane® 410A

- A broader lower-GWP portfolio including Forane® 32, 448A, 449A, 452A, 513A, and HTS 1233zd

For data centers, Arkema highlighted:

- Chillers: Forane® 513A and Forane® HTS 1233zd (low-GWP, non-flammable)

- Direct-to-chip cooling: Forane® HTS 1233zd as a heat-transfer fluid

- Building envelope: Forane® FBA 1233zd for foam insulation and roofing

“At AHR Expo, we’re demonstrating how Arkema’s refrigerants and thermal management technologies support the vast range of cooling applications, from HVAC to data center operations,” said Anthony O’Donovan, President and CEO of Arkema Inc. “Our portfolio reflects our long-term commitment to sustainability, performance, and partnership with customers navigating rapid industry change.”

PFX Group: fluids stop being “commodity”

On Jan. 27, 2026, PFX Group (Recochem and KOST USA) said it would showcase its SOLUTHERM™ heat transfer fluids at AHR, highlighting HVAC, mission-critical buildings, and data center liquid cooling.

Notable claims included:

- SOLUTHERM™ Direct Liquid Cooling (DLC) solutions deployed in select Lenovo®, Intel®, and Dell® systems

- Operating temperatures from -60°F/-51°C to 325°F/162°C

- Corrosion protection across multiple metals and stability across extreme temperature ranges

- LEED-supportive, bio-based product options, including corrosion testing standards (ASTM D8039)

“HVAC and data center cooling are no longer separate conversations,” said Jerome Dujoux, Vice President, Branding & Innovation. “Thermal management is becoming a connective tissue between digital infrastructure and the built environment.”

“Heat transfer fluids are often treated as a commodity when in reality, they influence energy efficiency, equipment lifespan and system reliability more than most people realize,” added Tom Corrigan, Director, R&D.

AHR’s major takeaway? The chemistry layer (refrigerants, heat-transfer fluids, and insulation foams) is increasingly being positioned as a performance and compliance differentiator rather than a background procurement detail.

7) The “Frontier” Idea: Cooling AI with Wastewater

Not every data center cooling news announcement in this roundup is ready for prime time, but some moonshots are worth noting as the industry hunts for water answers.

On Feb. 5, 2026, Waste2Nano LLC announced a proprietary “Wastewater-Cooled AI” platform, describing modular systems that integrate wastewater infrastructure with AI/data-center cooling, while “mining” wastewater solids into advanced materials.

Waste2Nano says its first planned deployment is at 10,000–20,000 m³/day (~5 MGD) capacity, designed to produce “a few tons per day” of MC/nanocellulose, with portable units engineered to integrate into existing wastewater treatment plant (WWTP) and data center infrastructure.
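The parenthetical "~5 MGD" can be related to the metric range with a simple unit conversion. This sketch assumes MGD means million US gallons per day, the usual convention at US wastewater plants; the function name is illustrative.

```python
LITERS_PER_US_GALLON = 3.785411784  # exact definition of the US liquid gallon
LITERS_PER_CUBIC_METER = 1000.0


def m3_per_day_to_mgd(flow_m3_per_day: float) -> float:
    """Convert cubic meters per day to million US gallons per day (MGD)."""
    gallons_per_day = flow_m3_per_day * LITERS_PER_CUBIC_METER / LITERS_PER_US_GALLON
    return gallons_per_day / 1_000_000


# Range quoted in the article
for flow in (10_000, 20_000):
    print(f"{flow:,} m3/day = {m3_per_day_to_mgd(flow):.2f} MGD")
```

On that basis the quoted range spans roughly 2.6 to 5.3 MGD, so the "~5 MGD" figure corresponds to the upper end of the range.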

“Any advanced society will mine its waste,” said Dr. Refael Aharon, CEO of Waste2Nano. “Cooling AI with drinking water makes no sense.”

The concept blends three flows:

- raw wastewater enabling cooling via closed-loop heat exchange

- sewage solids as feedstock for materials

- data center waste heat as process energy

Whether this becomes a scalable model or a niche experiment, it points to the direction of travel: cooling solutions will be evaluated not just on thermals and capex, but on community acceptance and water politics.

The New Cooling Stack: Integration Wins

The common thread across these disparate news items is not a single technology. It’s the way data center cooling is being redefined as an integrated stack involving:

- Chip-level innovation (HRL’s Low-Chill cold-plate architecture; immersion fluid chemistry).

- Distribution and pumping power (pressure-drop reductions become strategic).

- Facility heat rejection and resiliency (Carrier and Modine making the reliability case).

- Chemistry and compliance (low-GWP refrigerants and purpose-built heat-transfer fluids).

- Manufacturing throughput (Boyd scaling footprint; OEMs emphasizing capacity).

- Water optionality (hot-loop architectures and frontier models like wastewater integration).

If there’s a single editorial conclusion to be drawn for 2026, it might be that cooling is no longer a subsystem you bolt on after the IT plan is set. It’s now one of the main determinants of whether the AI plan is feasible at all in terms of where it can be built, how fast it can scale, and what it will cost to operate.

At Data Center Frontier, we talk the industry talk and walk the industry walk. In that spirit, DCF Staff members may occasionally use AI tools to assist with content. Elements of this article were created with help from OpenAI's GPT5.

About the Author

Matt Vincent

Matt Vincent is Editor in Chief of Data Center Frontier, where he leads editorial strategy and coverage focused on the infrastructure powering cloud computing, artificial intelligence, and the digital economy. A veteran B2B technology journalist with more than two decades of experience, Vincent specializes in the intersection of data centers, power, cooling, and emerging AI-era infrastructure. Since assuming the EIC role in 2023, he has helped guide Data Center Frontier’s coverage of the industry’s transition into the gigawatt-scale AI era, with a focus on hyperscale development, behind-the-meter power strategies, liquid cooling architectures, and the evolving energy demands of high-density compute, while working closely with the Digital Infrastructure Group at Endeavor Business Media to expand the brand’s analytical and multimedia footprint. Vincent also hosts The Data Center Frontier Show podcast, where he interviews industry leaders across hyperscale, colocation, utilities, and the data center supply chain to examine the technologies and business models reshaping digital infrastructure. He has served as Head of Content for the Data Center Frontier Trends Summit since its inception. Before becoming Editor in Chief, he served in multiple senior editorial roles across Endeavor Business Media’s digital infrastructure portfolio, with coverage spanning data centers and hyperscale infrastructure, structured cabling and networking, telecom and datacom, IP physical security, and wireless and Pro AV markets. He began his career in 2005 within PennWell’s Advanced Technology Division and later held senior editorial positions supporting brands such as Cabling Installation & Maintenance, Lightwave Online, Broadband Technology Report, and Smart Buildings Technology.
Vincent is a frequent moderator, interviewer, and keynote speaker at industry events including the HPC Forum, where he delivers forward-looking analysis on how AI and high-performance computing are reshaping digital infrastructure. He graduated with honors from Indiana University Bloomington with a B.A. in English Literature and Creative Writing and lives in southern New Hampshire with his family, remaining an active musician in his spare time.