Seeing Servers Get Cool: AI Infrastructure in Action

This installment concludes our series on how data center leaders are rethinking cooling strategies, embracing modularity, and preparing their facilities to support AI at scale with confidence and resilience. Here we move from design philosophy to execution, examining how these principles are applied in real AI deployments, where hybrid cooling systems and modular architectures translate directly into measurable gains in efficiency, uptime, and hardware longevity.

Let’s start with the key premise:

The transition to AI-native infrastructure is no longer theoretical. It is being implemented through coordinated partnerships that align silicon, power, and cooling into unified architectures.

The 100 MW AI reference blueprint demonstrates how hyperscale AI facilities can be purpose-built to support next-generation platforms such as NVIDIA DGX SuperPOD systems with DGX GB200 configurations.

This blueprint is the result of collaboration between NVIDIA, Siemens, and nVent. Together, these organizations developed a Tier III-capable, modular architecture designed specifically for large-scale liquid-cooled AI deployments.

Deployment Profile

- Environment: Large-scale AI data center campus

- Use Case: AI training and inference using next-generation NVIDIA GPU platforms

- Scale: Modular deployment supporting up to 100 MW of IT load through repeatable AI pods

The challenge addressed by this design is increasingly common. As GPU density rises, traditional air-based cooling architectures become a limiting factor. Supporting advanced AI systems requires far higher rack-level power while maintaining efficiency, uptime, and serviceability. Simply scaling legacy cooling approaches is no longer sufficient.

The Challenge

Operators pursuing hyperscale AI deployments face converging pressures:

- Rack-level power densities approaching and exceeding 100 kW

- Rapid demand for AI compute capacity

- Increased complexity in power distribution and cooling integration

- Sustainability requirements alongside performance goals

Traditional air-cooled architectures cannot support these densities efficiently. At the same time, deploying liquid cooling without coordinated power and automation infrastructure introduces operational risk.

The challenge is not simply removing heat. It is orchestrating power, cooling, monitoring, and automation into a deployable, resilient system.

The Architecture and Approach

The solution described in the Blueprint is built around a liquid-first cooling strategy supported by modular power and thermal infrastructure.

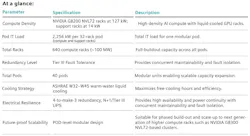

From the Blueprint’s At a Glance summary:

- Purpose-built for 100 MW hyperscale AI campuses

- Designed to house NVIDIA DGX SuperPOD systems

- Tier III-capable architecture

- Modular and fault-tolerant by design

- Optimized for energy efficiency and scalability

Key architectural elements include:

- Direct-to-chip liquid cooling removes more than 80 percent of rack heat

- Modular CDUs support approximately 1.6 MW of liquid heat removal per AI pod

- Each pod supports roughly 2 MW of IT load

- Residual air cooling handles 20 to 25 kW per rack within a controlled airflow environment

- Individual racks approach 127 kW while remaining serviceable and operationally stable
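The figures in this list can be cross-checked with some simple arithmetic. The sketch below is illustrative only: it uses the numbers quoted above, treats "more than 80 percent" as exactly 80 percent (a lower bound), and assumes the campus is built entirely from identical 2 MW pods. The function and variable names are hypothetical and do not come from the Blueprint.

```python
import math

# Figures quoted in the list above.
RACK_KW = 127.0          # peak rack power
LIQUID_FRACTION = 0.80   # "more than 80 percent", used here as a lower bound
POD_IT_MW = 2.0          # approximate IT load per pod
POD_LIQUID_MW = 1.6      # approximate liquid heat removal per pod
CAMPUS_IT_MW = 100.0     # campus IT load target


def rack_heat_split(rack_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Split rack heat into a liquid-cooled part and a residual air-cooled part."""
    liquid_kw = rack_kw * liquid_fraction
    return liquid_kw, rack_kw - liquid_kw


liquid_kw, air_kw = rack_heat_split(RACK_KW, LIQUID_FRACTION)

# Share of pod IT load covered by the CDUs' liquid loop.
pod_liquid_share = POD_LIQUID_MW / POD_IT_MW

# Number of pods needed for the campus, assuming identical pods (an assumption).
pods_for_campus = math.ceil(CAMPUS_IT_MW / POD_IT_MW)

print(f"Liquid heat per rack: {liquid_kw:.1f} kW")
print(f"Residual air heat per rack: {air_kw:.1f} kW")
print(f"Pod liquid share: {pod_liquid_share:.0%}")
print(f"Pods for campus: {pods_for_campus}")
```

At exactly 80 percent liquid capture, a 127 kW rack leaves about 25 kW for air, which sits at the top of the quoted 20 to 25 kW range. The pod-level ratio of 1.6 MW to 2 MW matches the same 80 percent split, so the rack-level and pod-level figures are mutually consistent.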

nVent provides the modular liquid cooling backbone, engineered for repeatability and serviceability at pod scale. Siemens integrates industrial-grade electrical systems, medium and low voltage distribution, automation platforms, and energy management software. NVIDIA defines the compute platform architecture and thermal envelope requirements.

The result is not a collection of independent components, but a unified system engineered for deployability.

Outcomes and Impact

The integrated reference architecture delivers more than incremental efficiency gains. It establishes a scalable operating model for hyperscale AI infrastructure.

By combining NVIDIA’s AI platform requirements with nVent’s liquid cooling systems and Siemens’ industrial-grade electrical and automation technologies, the architecture creates a tightly coordinated thermal and power ecosystem. This alignment enables:

- Accelerated time-to-compute, through modular pod-based deployment that reduces engineering ambiguity and shortens commissioning timelines

- Higher tokens-per-watt performance, achieved by optimizing thermal efficiency at the chip level while minimizing parasitic cooling overhead

- Lower PUE and improved energy efficiency, driven by liquid-first heat removal and intelligent power management

- Tier III-capable resiliency, integrating fault-tolerant power and cooling strategies that support uptime expectations for mission-critical AI workloads

- Sustainability alignment, through advanced energy monitoring, load optimization, and reduced cooling-related power consumption
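To make the PUE point concrete, recall that Power Usage Effectiveness is total facility power divided by IT power, so any reduction in cooling overhead lowers it directly. The sketch below is a minimal illustration of that relationship. The overhead values are hypothetical placeholders chosen for the example and are not taken from the Blueprint; only the 2 MW pod IT load comes from the text.

```python
def pue(it_kw: float, cooling_kw: float, other_overhead_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("IT load must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


POD_IT_KW = 2000.0  # roughly 2 MW of IT load per pod, as quoted in the article

# Hypothetical overheads for illustration only; not Blueprint figures.
OTHER_OVERHEAD_KW = 100.0     # e.g. distribution losses, lighting
COOLING_BASELINE_KW = 300.0   # assumed cooling power before optimization
COOLING_OPTIMIZED_KW = 200.0  # assumed cooling power after optimization

pue_baseline = pue(POD_IT_KW, COOLING_BASELINE_KW, OTHER_OVERHEAD_KW)
pue_optimized = pue(POD_IT_KW, COOLING_OPTIMIZED_KW, OTHER_OVERHEAD_KW)

print(f"Baseline PUE:  {pue_baseline:.2f}")
print(f"Optimized PUE: {pue_optimized:.2f}")
```

With these placeholder inputs, trimming 100 kW of cooling power on a 2 MW pod moves PUE by 0.05. Real values depend on climate, heat rejection design, and load profile.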

Real-time monitoring and integrated automation play a critical role. By aligning electrical infrastructure, cooling distribution, and system-level telemetry, operators gain visibility into both power and thermal performance at granular levels. This allows dynamic optimization of energy usage and thermal balance, reducing inefficiencies before they escalate into operational risk.

The broader operational impact is clear. When compute, power, and cooling are engineered as a unified architecture rather than independent layers, AI infrastructure becomes not only denser but also more deployable, resilient, and economically sustainable at scale.

Why This Matters

This case study brings the central theme of the paper into focus. AI infrastructure is no longer about adding capacity in isolation. It requires coordination across the value chain, from silicon vendors to cooling innovators to power and automation specialists.

The collaboration between NVIDIA, Siemens, and nVent illustrates what happens when cooling, power, and compute are designed together from first principles. Liquid cooling enables the density. Industrial-grade power systems enable resilience. Modular architecture enables speed and scalability.

As AI workloads continue to expand, the blueprint offers a practical model for building future-ready facilities that balance performance, sustainability, and operational confidence.

Download the full report, Power, Cooling, and Bravery: Designing Data Centers for the AI Age, featuring nVent, for exclusive content, including thoughts on how to build the AI factory with confidence.
